1. Executive Summary

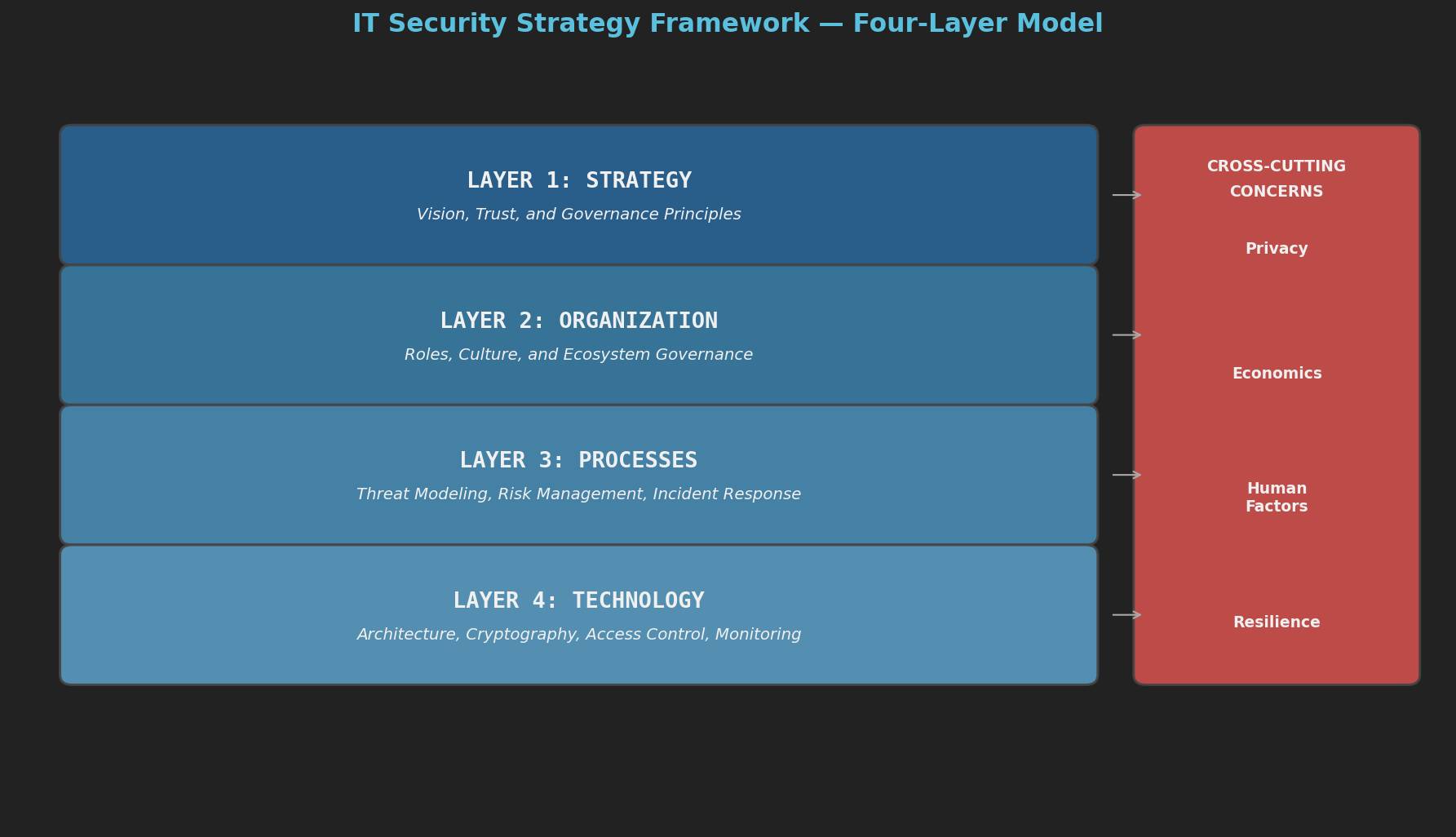

Security is fundamentally a people and economics problem, not merely a technology one. Organizations that treat security as a product to purchase rather than a process to manage inevitably fail. This framework, synthesized from the security literature and practical experience, proposes a four-layer model that moves from the abstract — strategic vision, trust, and governance principles — down to the concrete — technology architectures, cryptographic protocols, and monitoring systems.

Effective cybersecurity risk management requires holistic, systems-level thinking [14]. Individual tools, standards, or processes are insufficient on their own. Only by understanding how threats, controls, people, metrics, and governance interact — and by making those interactions visible — can organizations achieve resilient security postures.

The four layers correspond to four fundamental questions. Layer 1 (Strategy) establishes why we do security: building trust, governing emerging threats, and balancing privacy with defense. Layer 2 (Organization) addresses who does security: aligning incentives, defining roles, and shaping culture. Layer 3 (Processes) specifies what activities to perform: threat modeling, risk management, incident response, and compliance. Layer 4 (Technology) implements the how: access control models, cryptographic foundations, device design principles, and defense in depth.

Cross-cutting concerns — privacy, economics, human factors, and resilience — thread through every layer. A maturity model enables organizations to assess their current position and chart a path forward. For the broader societal context that shapes this landscape — trust, surveillance, regulation, and the economics of insecurity — see the companion post: The Societal Foundations of IT Security.

2. Framework Overview

2.1 Layer Diagram

2.2 Layer Interactions

The layers are not isolated — they form a feedback loop. Strategic vision defines organizational structure and incentive alignment, which in turn determines which processes are feasible. Process requirements dictate technology selection, and technology capabilities and failures feed back into strategy revision. In the reverse direction, organizational friction reveals unrealistic strategy, process gaps expose missing roles, and technology limitations force process adaptation.

This interconnection is why a systems thinking approach is essential [14]. Fragmented, siloed approaches that treat each layer independently will miss the feedback loops, dependencies, and emergent properties that determine whether security actually works. A threat catalog that doesn’t inform control selection, or metrics that don’t connect to risk appetite, or incident response that doesn’t feed lessons learned back into threat modeling — these are symptoms of missing system integration.

3. Layer 1: STRATEGY — Vision, Trust, and Governance Principles

WHY we do security.

3.1 Trust and Security Trade-offs

Society depends on trust, and trust is maintained through four overlapping pressures: moral (personal ethics), reputational (social consequences), institutional (laws and regulations), and security (technical controls) [1]. No single pressure suffices. Effective security strategy deploys all four in combination. Over-reliance on technical security without institutional and reputational backing is both costly and fragile.

For organizations, this means security strategy must go beyond technology. Building a culture of integrity (moral pressure), maintaining a strong security reputation with customers and partners (reputational pressure), complying with and advocating for sound regulation (institutional pressure), and deploying technical controls (security pressure) — all four must be part of the strategy.

3.2 Systems Thinking and Adaptive Governance

Hacking is not limited to computers [2]. A hack is any exploitation of a system’s rules in unintended ways — regulatory arbitrage, process loopholes, social engineering. Any system with rules creates opportunities for rule-exploitation, which means governance must evolve as fast as attackers do. Static rules get gamed; adaptive governance is essential.

Security governance must be a living system. Annual policy reviews are insufficient. Organizations need continuous monitoring of how their rules are being exploited and rapid adaptation mechanisms to close emerging gaps. The framework itself is not a one-time implementation but an evolving system that must adapt as threats, technology, and business objectives change [14].

3.3 Privacy as Strategic Pillar

Privacy is not the opposite of security — it is a prerequisite for it [3]. Data should be treated as a toxic asset: collect only what you need, dispose of it when done. Systems should be designed with data minimization as the default, and data collected for one purpose should not be repurposed without consent.

Privacy is not a compliance checkbox but a design philosophy. It must be embedded at the strategy level and flow through all subsequent layers.

3.4 Resilience over Prevention

As computers become embedded in everything (IoT, cyber-physical systems, smart infrastructure), security failures become safety failures [4]. Organizations should assume breach and design for graceful degradation rather than relying solely on prevention. Ten principles for device security (see Section 6.6) flow from this strategic vision.

3.5 Defining Risk Appetite

Before any tactical work begins, the organization must articulate its risk appetite — how much risk it is willing to accept — and its risk tolerance — the acceptable variation in outcomes [14]. A risk appetite statement is not a vague aspiration; it should be specific enough to drive control selection and investment decisions. For example: “We accept up to 4 hours of downtime per quarter for non-critical systems, but zero undetected data exfiltration from systems handling customer PII.”
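One way to check that a risk appetite statement is specific enough is to see whether it can be written down as thresholds and tested against observations. The following is a minimal sketch under that assumption. The class and field names are hypothetical; only the two thresholds come from the example statement above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskAppetite:
    # Thresholds taken from the example statement above.
    max_noncritical_downtime_hours_per_quarter: float = 4.0
    max_undetected_pii_exfiltrations: int = 0


@dataclass(frozen=True)
class QuarterlyObservation:
    # Hypothetical measurements, e.g. from monitoring and incident reviews.
    noncritical_downtime_hours: float
    undetected_pii_exfiltrations: int


def appetite_breaches(appetite: RiskAppetite, observed: QuarterlyObservation) -> list[str]:
    """Return a description of every threshold the quarter exceeded."""
    breaches = []
    if observed.noncritical_downtime_hours > appetite.max_noncritical_downtime_hours_per_quarter:
        breaches.append("non-critical downtime exceeds appetite")
    if observed.undetected_pii_exfiltrations > appetite.max_undetected_pii_exfiltrations:
        breaches.append("undetected PII exfiltration exceeds appetite")
    return breaches
```

A statement that cannot be reduced to checks of this kind is probably still too vague to steer control selection.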

Risk appetite flows from the board and executive leadership, through the CISO, into every control decision. Without it, security teams operate without a compass — either over-investing in low-risk areas or under-investing in critical ones.

4. Layer 2: ORGANIZATION — Roles, Culture, and Ecosystem Governance

WHO does security and HOW they are organized.

4.1 Economics of Security

Security is an economic problem before it is a technical one [6, 8]. You cannot buy security; you must continuously practice it. Perfect security is impossible, so the goal is to reduce risk to an acceptable level at acceptable cost. Countermeasures must match the threat, which requires modeling adversaries by their objectives, access, resources, expertise, and risk tolerance. Every added feature is a potential vulnerability, making simplicity itself a security principle.

Security fails when those who could fix a problem lack incentive to do so [8]. Breaches impose costs on third parties who have no control over the insecure system. Cyber insurance can help align incentives by pricing risk appropriately. Organizations often invest in visible but ineffective measures rather than invisible but effective controls — a pattern that must be actively resisted.

Every security system needs four components: a policy (what to enforce), a mechanism (how to enforce it), assurance (proof it works), and incentives (why people comply) [8]. Missing any one of these four pillars leads to failure.

4.2 Architecture-Governance Alignment

Insights from platform ecosystem research map directly to security governance [13]. Security team structure must mirror the systems they protect — a mismatch between organizational boundaries and technical boundaries creates blind spots. Security posture must evolve faster than the threat landscape just to maintain the status quo; standing still means falling behind. There is an inherent tension between central security control and business unit autonomy — too much central control stifles the business, too much autonomy fragments security. Architecture and governance must evolve together; changing one without the other creates gaps. And complex security architectures must be modular, so components can be audited and upgraded independently.

Security governance misaligned with the technical architecture it governs will be circumvented. If the architecture is decentralized but governance is centralized, or vice versa, exploitable gaps emerge.

4.3 Security Planning and Business Continuity

Security must be planned as an ongoing lifecycle, not a one-time project [10]. The cycle begins with risk assessment — identifying assets, threats, vulnerabilities, and their intersections. This feeds into security policy definition, followed by implementation of technical, administrative, and physical controls. Training and awareness come next, because people are both the weakest link and the strongest defense. Monitoring and audit verify that controls work as intended, while incident response prepares for when controls fail. Business continuity planning ensures critical operations survive disruption. Finally, the cycle returns to risk assessment, because the threat landscape has changed.

In cloud environments, security responsibility is divided between provider and customer. With IaaS, the provider handles physical infrastructure and the hypervisor, while the customer is responsible for the OS, applications, data, and identity. PaaS shifts OS and runtime responsibility to the provider, and SaaS shifts application responsibility as well — but the customer always remains responsible for data, identity, and access configuration.
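The split described above can be captured as a small lookup table, which is handy when tagging controls with an owner. This is an illustrative sketch only: the layer names are a simplification chosen here, and real provider contracts differ in detail.

```python
# Layers named in the paragraph above (simplified, illustrative labels).
LAYERS = {
    "physical", "hypervisor", "os", "runtime",
    "application", "data", "identity", "access_configuration",
}

# What the provider manages under each service model, per the text above.
PROVIDER_MANAGED = {
    "IaaS": {"physical", "hypervisor"},
    "PaaS": {"physical", "hypervisor", "os", "runtime"},
    "SaaS": {"physical", "hypervisor", "os", "runtime", "application"},
}


def responsible_party(model: str, layer: str) -> str:
    """Return 'provider' or 'customer' for a layer under a service model."""
    if model not in PROVIDER_MANAGED:
        raise ValueError(f"unknown service model: {model}")
    if layer not in LAYERS:
        raise ValueError(f"unknown layer: {layer}")
    return "provider" if layer in PROVIDER_MANAGED[model] else "customer"
```

Note that `data`, `identity`, and `access_configuration` never appear in any provider set, which mirrors the point that the customer always keeps those responsibilities.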

4.4 Organizational Roles and the Three Lines of Defense

Security requires defined roles with clear accountability, organized around the three lines of defense model [14]. The first line is business operations — the teams that own and manage risk day to day. They are responsible for implementing controls and operating securely within their domains. The second line comprises risk management and compliance functions that oversee, challenge, and support the first line. The third line is internal audit, providing independent assurance that the first two lines are working effectively.

Within this structure, the CISO owns overall security posture, risk appetite, and board communication. Security architects design security architecture aligned with business needs. Threat modelers systematically identify and categorize threats. Incident responders detect, contain, and recover from security incidents. A privacy officer ensures compliance with privacy principles. Red teams simulate adversary behavior to test defenses. And security champions, embedded in development teams, bridge the gap between security and engineering [12].

For each security process, it must be clear who is responsible for doing the work, who is accountable for the outcome, who needs to be consulted, and who should be informed [14]. Without these explicit assignments, critical activities fall through the cracks — everyone assumes someone else is handling it.
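These assignments are easy to validate mechanically once they are written down. The sketch below assumes the common convention of one letter per party (R, A, C, I) and exactly one Accountable party per process. That convention, the function name, and the sample data are assumptions added here, not something the framework prescribes.

```python
def validate_raci(assignments: dict[str, dict[str, str]]) -> list[str]:
    """Check each process for exactly one Accountable and at least one Responsible.

    `assignments` maps process name -> {party: "R" | "A" | "C" | "I"}.
    """
    problems = []
    for process, roles in assignments.items():
        letters = list(roles.values())
        accountable = letters.count("A")
        if accountable != 1:
            problems.append(f"{process}: expected exactly 1 Accountable, found {accountable}")
        if "R" not in letters:
            problems.append(f"{process}: nobody is Responsible")
    return problems


# Hypothetical sample data: the second process has no owner at all.
raci = {
    "incident response": {
        "CISO": "A", "incident responders": "R",
        "privacy officer": "C", "board": "I",
    },
    "key rotation": {"security architects": "C", "business operations": "I"},
}
problems = validate_raci(raci)
```

Here `problems` flags "key rotation" twice, which is exactly the "everyone assumes someone else is handling it" situation.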

Culture matters as much as structure. Security must be everyone’s responsibility, not just the security team’s [10]. People must feel safe reporting security concerns without blame [8]. Organizations should design and operate as though the adversary is already inside — the assume-breach mentality [5]. And because the threat landscape evolves continuously, so must the organization’s knowledge [2].

5. Layer 3: PROCESSES — Threat Modeling, Risk Management, and Incident Response

WHAT activities organizations perform.

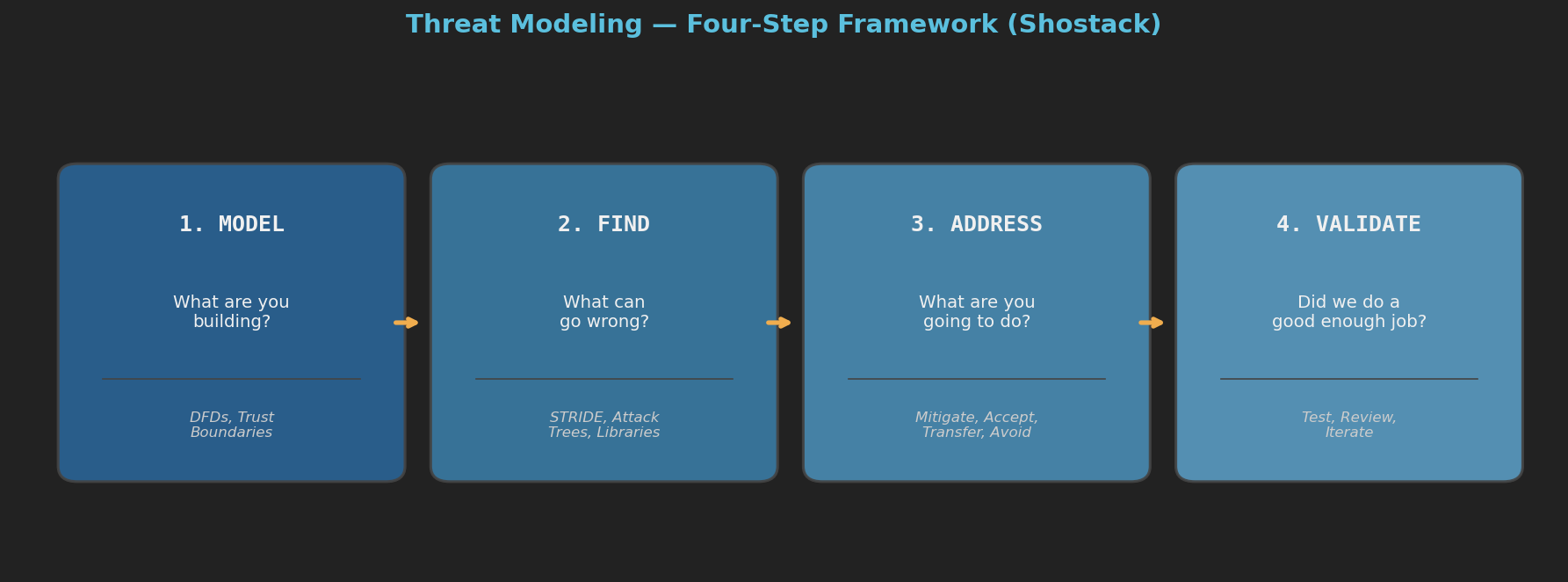

5.1 Threat Modeling: Four-Step Framework

A well-established four-step framework provides a repeatable process for identifying and addressing threats [12].

The process begins by modeling what you are building, using data flow diagrams and trust boundaries. Next, you find what can go wrong, applying the STRIDE framework and attack trees. Then you address each threat by deciding whether to mitigate, accept, transfer, or avoid the risk. Finally, you validate the work — testing, reviewing, and iterating until the analysis is thorough.

STRIDE categorizes threats into six types: Spoofing (pretending to be someone else, violating authentication), Tampering (unauthorized data modification, violating integrity), Repudiation (denying an action was performed, violating non-repudiation), Information disclosure (exposing data to unauthorized parties, violating confidentiality), Denial of service (making a system unavailable), and Elevation of privilege (gaining unauthorized access levels, violating authorization).

STRIDE can be applied per element in a data flow diagram. External entities are susceptible to spoofing and repudiation. Processes are susceptible to all six threat types. Data stores are susceptible to tampering, information disclosure, and denial of service. Data flows are susceptible to tampering, information disclosure, and denial of service.
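The per-element mapping above translates directly into a lookup table that turns a data flow diagram into a checklist of threats to consider. A minimal sketch follows. The element names in the sample diagram are hypothetical.

```python
# Which STRIDE categories apply to which DFD element type, per the text above.
STRIDE_BY_ELEMENT = {
    "external_entity": {"Spoofing", "Repudiation"},
    "process": {
        "Spoofing", "Tampering", "Repudiation",
        "Information disclosure", "Denial of service", "Elevation of privilege",
    },
    "data_store": {"Tampering", "Information disclosure", "Denial of service"},
    "data_flow": {"Tampering", "Information disclosure", "Denial of service"},
}


def threat_checklist(diagram: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Map each (name, element_type) pair to the sorted threats to review."""
    return {name: sorted(STRIDE_BY_ELEMENT[kind]) for name, kind in diagram}


# Hypothetical diagram: a browser talking to an API backed by a database.
checklist = threat_checklist([
    ("browser", "external_entity"),
    ("api", "process"),
    ("api -> db", "data_flow"),
    ("orders db", "data_store"),
])
```

The output is a starting list of questions per element, not a finished threat model. Each entry still needs a judgment about whether the threat is realistic in context.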

Attack trees provide a complementary technique. The root node represents the attacker’s goal, child nodes represent methods to achieve it, and leaf nodes represent concrete attack steps. Nodes can be AND (all children required) or OR (any child suffices), and each can be annotated with cost, difficulty, and detectability.
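To make the AND/OR semantics concrete, here is a small sketch that computes the cheapest path to the root goal from per-leaf cost annotations. The tree, the node names, and the cost figures are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    kind: str = "LEAF"            # "AND", "OR", or "LEAF"
    cost: float = 0.0             # attacker cost; only used on leaves
    children: list["Node"] = field(default_factory=list)


def cheapest_attack(node: Node) -> float:
    """Minimum attacker cost to achieve this node's goal."""
    if node.kind == "LEAF":
        return node.cost
    child_costs = [cheapest_attack(child) for child in node.children]
    if node.kind == "AND":        # every child step is required
        return sum(child_costs)
    if node.kind == "OR":         # any single child suffices
        return min(child_costs)
    raise ValueError(f"unknown node kind: {node.kind}")


# Hypothetical tree with made-up costs.
tree = Node("read customer database", "OR", children=[
    Node("use stolen admin account", "AND", children=[
        Node("phish admin password", cost=100),
        Node("bypass MFA", cost=500),
    ]),
    Node("exploit SQL injection", cost=300),
])
cheapest = cheapest_attack(tree)
```

In this example the SQL injection branch (300) is cheaper than the two-step credential branch (600), so that is where a defender gets the most value from raising attacker cost. The same recursion works for difficulty or detectability if those annotations combine the same way.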

Threat identification must be continuous and informed by threat intelligence [14]. Frameworks like MITRE ATT&CK provide structured catalogs of adversarial tactics and techniques, enabling organizations to map their defenses against known attack patterns rather than guessing.

For privacy-sensitive systems, the LINDDUN framework addresses privacy-specific threats: linkability, identifiability, non-repudiation (when denial should be possible), detectability, disclosure, unawareness, and non-compliance with privacy legislation [12].

5.2 Risk Management as a System

Traditional risk management treats security as a linear chain — a threat agent exploits a vulnerability to affect an asset, and controls reduce the resulting risk. This model is useful for individual risk assessments but dangerously incomplete as an organizational approach. It misses the feedback loops, dependencies, and emergent behaviors that determine whether risk management actually works [14].

A systems view reveals that risk management is not a chain but a web of interacting elements [14]. Threats must be continuously identified and tracked through threat intelligence. When threats materialize, they produce events that detection systems must observe, correlate, and classify as incidents. Events and incidents reveal issues — gaps in controls, processes, or awareness — that must be formally managed through their lifecycle. Controls are the safeguards deployed to reduce risk, but they are only as good as the assessments that evaluate their effectiveness. Assessments produce metrics that quantify performance and risk trends. People operate across every element — as operators, decision-makers, and often as the weakest link. All of this feeds into cybersecurity risk estimation, which drives decisions about where to invest, what to accept, and what to remediate. And overarching enterprise governance sets the risk appetite and strategic direction that shapes every other element.

The critical insight is that these elements form feedback loops. Metrics should inform governance decisions, which change risk appetite, which reshapes control priorities. Incident lessons should flow back into threat modeling and control design. Assessment findings should drive issue remediation, which improves controls, which changes the next round of metrics. When these feedback loops are broken — when incidents don’t improve controls, or metrics don’t reach decision-makers, or governance doesn’t respond to changing risk — the system degrades silently until a major breach exposes the gaps.

The classic CIA triad identifies the three core security properties that controls must protect: confidentiality (information accessible only to authorized parties), integrity (information modified only by authorized parties in authorized ways), and availability (systems and data accessible when needed). Additional properties include authentication, non-repudiation, and privacy.

Risk analysis follows a systematic methodology within this system. First, identify what has value — data, systems, reputation, continuity. Then identify threats using STRIDE, threat intelligence, and historical data. Assess vulnerabilities through scanning, assessment, and review. Estimate the likelihood and impact of each threat-vulnerability pair, calculate risk as likelihood times impact, prioritize by risk score, and select controls — choosing to avoid, transfer, accept, or mitigate each risk.

Risk acceptance deserves special attention [14]. When an organization consciously decides to tolerate a known risk, that decision must be formally documented, approved at the appropriate level, and reviewed periodically. Informal risk acceptance — where everyone knows about a vulnerability but nobody formally owns the decision to leave it — is one of the most common failure modes.

Risk Assessment Template:

| Asset | Threat | Vulnerability | Likelihood (1-5) | Impact (1-5) | Risk Score | Control | Residual Risk |
|---|---|---|---|---|---|---|---|
| Customer DB | SQL injection | Unvalidated input | 4 | 5 | 20 | Input validation + WAF | 4 |
| Email system | Phishing | Untrained staff | 5 | 3 | 15 | Training + MFA | 5 |
| Web server | DDoS | Single point of failure | 3 | 4 | 12 | CDN + rate limiting | 4 |
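The scoring and prioritization steps from the template can be sketched in a few lines. The rows reuse the likelihood and impact values from the table. The class and variable names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    asset: str
    threat: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    def __post_init__(self):
        for value in (self.likelihood, self.impact):
            if not 1 <= value <= 5:
                raise ValueError("likelihood and impact must be in 1..5")

    @property
    def score(self) -> int:
        # Risk score as defined in the text: likelihood times impact.
        return self.likelihood * self.impact


register = [
    Risk("Web server", "DDoS", likelihood=3, impact=4),
    Risk("Customer DB", "SQL injection", likelihood=4, impact=5),
    Risk("Email system", "Phishing", likelihood=5, impact=3),
]

# Highest score first, so remediation effort goes to the top of the list.
prioritized = sorted(register, key=lambda r: r.score, reverse=True)
```

A multiplicative 1-5 scale is a coarse ordinal ranking, not a measurement. It is useful for ordering work, less so for comparing risks whose scores are close.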

5.3 Incident Response

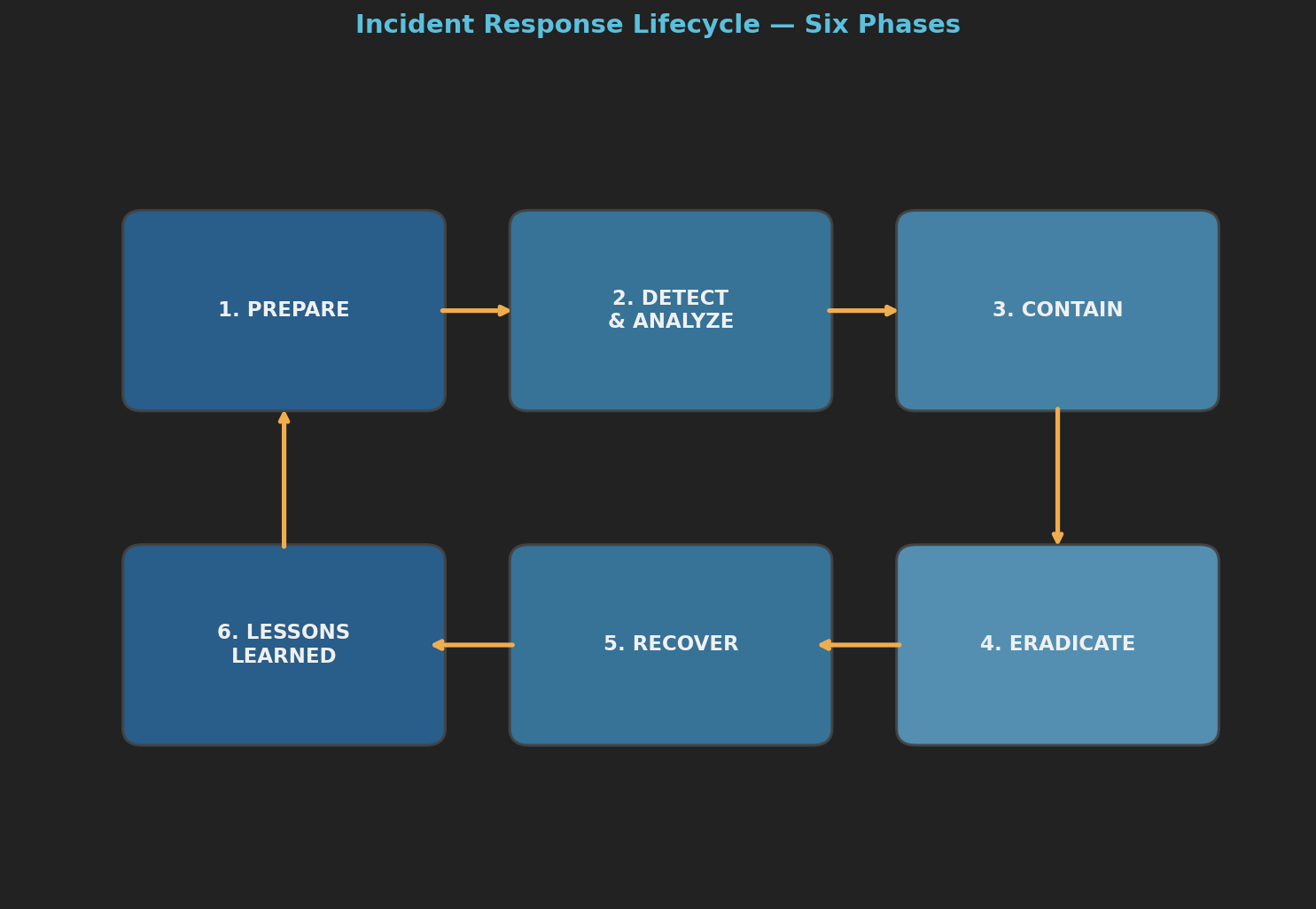

The incident response lifecycle consists of six phases [5, 11].

Preparation establishes policies, tools, training, playbooks, and communication plans. Detection and analysis uses log monitoring, SIEM alerts, and anomaly detection to classify and triage incidents. Containment isolates affected systems and preserves evidence through both short-term and long-term strategies. Eradication removes malware, closes vulnerabilities, and resets credentials. Recovery restores systems, verifies integrity, and monitors for re-infection. Finally, lessons learned drives post-incident review, root cause analysis, and procedure updates — feeding improvements back into the cycle.

Effective incident response follows a tight cycle: observe events across your technology estate, correlate them to identify actual incidents, decide on a response strategy, and act to contain and remediate [14]. The faster this cycle turns relative to the attacker’s pace, the less damage they can inflict. Organizations where detection is slow, analysis is fragmented, or decision authority is unclear give attackers free rein.

Assume you will be breached, and invest as much in detection and response as in prevention [5]. Speed of detection matters more than strength of prevention — dwell time is the key metric. Contain first and attribute later. And communicate honestly, because cover-ups damage trust more than the breach itself.

5.4 Assessments and Issue Management

Assessments evaluate whether controls are actually effective [14]. Three primary types complement each other. Risk and Control Self-Assessments (RCSA) have business units evaluate their own controls — this builds first-line ownership but may lack objectivity. Penetration testing has technical experts attempt to defeat controls under realistic conditions. And audits provide independent third-line verification of compliance and effectiveness. Assessments must be systematic, recurring, and tied to the overall risk management framework — not performed as isolated exercises.

When assessments identify gaps, a structured issue management lifecycle ensures they get resolved [14]. Issues are identified, documented, analyzed for root cause, assigned remediation owners and deadlines, tracked to completion, and verified as closed. Issues must be prioritized based on risk, and organizations need formal processes to ensure they don’t languish unresolved. When remediation is not feasible, formal risk acceptance (see Section 5.2) applies.
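The lifecycle above behaves like a small state machine, and encoding it that way prevents issues from being silently closed without verification. The sketch below is one possible encoding. The state names and the two "reopen" transitions are assumptions added for illustration.

```python
from enum import Enum, auto


class IssueState(Enum):
    IDENTIFIED = auto()
    DOCUMENTED = auto()
    ANALYZED = auto()         # root cause established
    ASSIGNED = auto()         # remediation owner and deadline set
    REMEDIATED = auto()
    VERIFIED_CLOSED = auto()
    RISK_ACCEPTED = auto()    # formal acceptance when remediation is not feasible


ALLOWED_TRANSITIONS = {
    IssueState.IDENTIFIED: {IssueState.DOCUMENTED},
    IssueState.DOCUMENTED: {IssueState.ANALYZED},
    IssueState.ANALYZED: {IssueState.ASSIGNED, IssueState.RISK_ACCEPTED},
    IssueState.ASSIGNED: {IssueState.REMEDIATED},
    # Assumption: failed verification sends the issue back to its owner.
    IssueState.REMEDIATED: {IssueState.VERIFIED_CLOSED, IssueState.ASSIGNED},
    IssueState.VERIFIED_CLOSED: set(),
    # Assumption: periodic review of an accepted risk can reopen analysis.
    IssueState.RISK_ACCEPTED: {IssueState.ANALYZED},
}


def advance(current: IssueState, target: IssueState) -> IssueState:
    """Move an issue to `target`, rejecting transitions that skip steps."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"illegal transition {current.name} -> {target.name}")
    return target
```

With this in place, an attempt to jump from `IDENTIFIED` straight to `VERIFIED_CLOSED` raises an error instead of quietly succeeding.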

5.5 Security Metrics

What gets measured gets managed — but only if the measurements are meaningful [14]. Security metrics fall into three categories. Key Risk Indicators (KRIs) signal increasing risk — for example, the number of unpatched critical vulnerabilities trending upward, or an increase in phishing attempts targeting the organization. Key Control Indicators (KCIs) measure whether controls are working — such as the percentage of systems with current patches, or the time to revoke access for departing employees. Key Performance Indicators (KPIs) track overall program performance — mean time to detect incidents, percentage of systems covered by threat modeling, or training completion rates.
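Two of the metrics named above are simple enough to compute directly: patch coverage (a KCI) and mean time to detect (a KPI). The sketch below shows the arithmetic. The input shapes and sample values are hypothetical, and in practice the data would come from an asset inventory and an incident tracker.

```python
from datetime import datetime, timedelta


def patch_coverage(patched_by_system: dict[str, bool]) -> float:
    """KCI: percentage of systems whose patches are current."""
    if not patched_by_system:
        raise ValueError("no systems supplied")
    return 100.0 * sum(patched_by_system.values()) / len(patched_by_system)


def mean_time_to_detect(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """KPI: average gap between incident start and detection.

    Each incident is a (started_at, detected_at) pair.
    """
    if not incidents:
        raise ValueError("no incidents supplied")
    total = sum((detected - started for started, detected in incidents), timedelta())
    return total / len(incidents)


# Hypothetical sample data.
coverage = patch_coverage({"web-1": True, "web-2": True, "db-1": False, "mail-1": True})
mttd = mean_time_to_detect([
    (datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 10, 0)),   # detected after 2 h
    (datetime(2024, 2, 1, 9, 0), datetime(2024, 2, 1, 13, 0)),   # detected after 4 h
])
```

With this sample data, coverage is 75 percent and mean time to detect is three hours. A mean hides outliers, so a median or percentile is often reported alongside it.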

Metrics must be meaningful to their audience. Technical metrics (patch currency, vulnerability counts) serve practitioners. Business-aligned metrics (risk exposure in financial terms, compliance posture, incident cost trends) serve executives and boards. A metric that nobody acts on is waste; a metric that drives the wrong behavior is worse than waste.

5.6 Compliance and Regulatory Integration

Compliance is a floor, not a ceiling [3, 11]. Meeting regulatory requirements is necessary but not sufficient for security. Every control should reference which requirements it satisfies, and organizations should regularly compare current controls against regulatory requirements through gap analysis. Audit findings should improve processes, not just help pass audits. Regulations rarely require everything needed, so controls should be layered beyond minimum requirements. Privacy must be built into systems from the start, not as an afterthought. And compliance decisions and rationale must be documented.

Common frameworks to integrate include GDPR for privacy and data protection, SOC 2 for service organization controls, ISO 27001 for information security management, the NIST Cybersecurity Framework for risk-based approaches, and industry-specific standards such as PCI DSS, HIPAA, and NIS2. No single standard is sufficient — organizations must integrate multiple frameworks to achieve comprehensive coverage [14].

6. Layer 4: TECHNOLOGY — Architecture, Cryptography, Access Control, and Monitoring

The technical HOW.

6.1 Security Engineering Framework

Security engineering rests on four pillars [8]. Policy defines what we are trying to achieve — access control rules, classification levels, acceptable use. Mechanism specifies how we enforce the policy — encryption, firewalls, biometrics, audit logs. Assurance establishes how we know the mechanism works — through formal verification, penetration testing, code review, and certification. And incentive addresses why people will comply — through liability, insurance premiums, reputation, and regulation.

Most security failures are not mechanism failures. They are policy failures (wrong rules), assurance failures (untested assumptions), or incentive failures (no reason to comply). Technology alone addresses only one of four necessary pillars.

6.2 Controls Hierarchy and Design Principles

Controls follow a hierarchy from abstract to concrete [14]. Policies set strategic direction (“all data at rest must be encrypted”). Standards define requirements (“AES-256 with keys stored in an HSM”). Processes describe workflows (“the key rotation process runs quarterly”). Procedures specify step-by-step instructions (“log in to the HSM console, select Key Management, …”). Guidelines offer recommendations where flexibility is acceptable (“prefer Ed25519 for SSH keys”).

Underpinning all controls are foundational security design principles [8, 14]:

- Fail safe defaults — when a control fails, it should deny access rather than grant it

- Complete mediation — every access to every resource must be checked against the access control mechanism

- Least privilege — every subject should operate with the minimum set of privileges needed for the task

- Separation of duties — no single person should control all aspects of a critical transaction

- Economy of mechanism — keep the design as simple and small as possible; complexity is the enemy of security

- Least common mechanism — minimize shared mechanisms between users, reducing the risk that one user’s actions affect another

- Psychological acceptability — security mechanisms must be easy enough to use that people will actually use them correctly

These principles are not aspirational — they are engineering requirements. Violating them creates predictable vulnerabilities.

6.3 Zero Trust Architecture

Traditional perimeter-based security assumes that everything inside the network is trusted. Zero Trust abandons this assumption entirely: never trust, always verify [14]. Every request — regardless of origin — must be authenticated, authorized, and encrypted. Access decisions are made per-request based on identity, device health, location, and behavior, not on network position.

Zero Trust is not a product to purchase but an architecture to adopt. It requires strong identity management, micro-segmentation, continuous monitoring, and the assumption that any component may already be compromised. It aligns naturally with the assume-breach mentality that runs through this framework.
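A per-request access decision of this kind can be sketched as a pure function over the request's context. Everything specific below (field names, the country allow-list, the anomaly threshold) is a hypothetical placeholder. The point is only that network position is present in the context and deliberately ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    authenticated: bool          # identity verified for this request
    device_healthy: bool         # e.g. patched and disk-encrypted
    country: str                 # coarse location signal
    anomaly_score: float         # 0.0 (normal) to 1.0 (highly unusual behavior)
    on_corporate_network: bool   # recorded, but never used to grant access


def authorize(
    ctx: RequestContext,
    allowed_countries: frozenset[str] = frozenset({"DE", "NL"}),
    max_anomaly: float = 0.7,
) -> bool:
    """Grant access only if every signal checks out; deny otherwise."""
    return (
        ctx.authenticated
        and ctx.device_healthy
        and ctx.country in allowed_countries
        and ctx.anomaly_score <= max_anomaly
    )
```

Because the function returns `True` only when all conditions hold, any missing or failing signal results in denial, which also matches the fail-safe defaults principle from Section 6.2.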

6.4 Formal Access Control Models

Three formal access control models have shaped how we think about authorization [8, 9]. Bell-LaPadula (1973) protects confidentiality with the rule “no read up, no write down” — subjects cannot read above their clearance or write below it, preventing downward leakage of classified information. Biba (1977) is its integrity complement: “no read down, no write up” — subjects cannot read below their integrity level or write above it, preventing upward corruption. Clark-Wilson (1987) takes a commercial approach, enforcing well-formed transactions where all data modifications go through validated transformation procedures with separation of duty.

Each model has its strengths and blind spots. Bell-LaPadula ignores integrity; Biba ignores confidentiality; Clark-Wilson is complex to implement at scale. Most real systems combine elements of all three. A banking system, for instance, needs Bell-LaPadula for customer data confidentiality, Biba for transaction integrity, and Clark-Wilson for well-formed transactions with separation of duties.
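The read and write rules of Bell-LaPadula and Biba are mirror images, which is easiest to see side by side. The sketch below models levels as plain integers (higher means more sensitive or more trustworthy) and leaves out categories and compartments, so it is a simplification of both models.

```python
# Bell-LaPadula: levels are confidentiality clearances and classifications.
def blp_can_read(subject_level: int, object_level: int) -> bool:
    return subject_level >= object_level   # no read up


def blp_can_write(subject_level: int, object_level: int) -> bool:
    return subject_level <= object_level   # no write down


# Biba: levels are integrity ratings.
def biba_can_read(subject_level: int, object_level: int) -> bool:
    return subject_level <= object_level   # no read down


def biba_can_write(subject_level: int, object_level: int) -> bool:
    return subject_level >= object_level   # no write up
```

For any pair of unequal levels, each Biba check returns the opposite of the corresponding Bell-LaPadula check. A system that enforces both rule sets over the same level ordering therefore only permits access when the levels are equal, which is one reason real systems use separate confidentiality and integrity labels.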

6.5 Cryptographic Foundations

Kerckhoffs’ Principle states that a cryptographic system should be secure even if everything about the system, except the key, is public knowledge [7]. Security must reside in the key, not in the secrecy of the algorithm.

The core building blocks include symmetric encryption (AES, ChaCha20) for confidentiality using a shared key, asymmetric cryptography (RSA, ECC) for encryption and key establishment using public/private key pairs, hash functions (SHA-256, SHA-3, BLAKE2) for integrity verification, digital signatures (RSA-PSS, ECDSA, EdDSA variants such as Ed25519) for authentication combined with integrity and non-repudiation, key exchange protocols (Diffie-Hellman, ECDH) for securely establishing shared secrets over insecure channels, and MACs (HMAC-SHA256, Poly1305) for integrity and authentication using shared keys.

Key management is where cryptography meets operations. Keys must be generated using cryptographically secure random number generators, stored securely using HSMs or key vaults, distributed through authenticated exchange protocols, rotated on a regular schedule, and revocable immediately when compromised. The full lifecycle runs from generation through distribution, storage, use, rotation, and finally destruction.
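As a small illustration of two of these building blocks, the sketch below generates a key from a cryptographically secure random source and uses it for HMAC-SHA256 message authentication. It relies only on Python's standard library (`secrets`, `hmac`, `hashlib`). Holding the key in a process variable is a simplification for the example, since the text above calls for an HSM or key vault in production.

```python
import hashlib
import hmac
import secrets

# Generate a 256-bit key from the operating system's CSPRNG.
key = secrets.token_bytes(32)


def sign(key: bytes, message: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the message."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(key: bytes, message: bytes, tag: bytes) -> bool:
    """Check a tag using a constant-time comparison to avoid timing leaks."""
    return hmac.compare_digest(sign(key, message), tag)


message = b"transfer 100 EUR to account 42"
tag = sign(key, message)
```

`verify(key, message, tag)` succeeds, while any change to the message or the key makes it fail. Consistent with Kerckhoffs' Principle, nothing about the algorithm is secret here, only the key.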

These building blocks are assembled into standard protocols: TLS 1.3 for encrypted web communications, SSH for secure remote administration, IPsec for network-layer encryption, the Signal Protocol for end-to-end encrypted messaging, and WireGuard for modern VPN connections.

6.6 Device Design Principles

For IoT and connected devices, ten design principles provide essential guidance [4]. Devices should be secure by default with no user configuration required. They should provide forward security, designing for future threats rather than just today’s. When security fails, devices should fail predictably and safely, entering a safe state. Users should know what data is collected and how it is used — transparency is non-negotiable. Automatic, authenticated security updates must be provided for the lifetime of the device. Authentication failures should deny access, not grant it. Devices should use standard protocols rather than inventing proprietary security. The attack surface should be minimized by disabling unnecessary features. Third-party security testing should be supported and encouraged. And designers should anticipate misuse, designing for adversarial conditions rather than just intended use.

Seven complementary data security principles apply to the data these devices generate [4]: minimize collection, protect what you collect, be transparent about what and why, limit use to the stated purpose, delete data when no longer needed, let users take their data with them, and notify affected parties promptly when breaches occur.

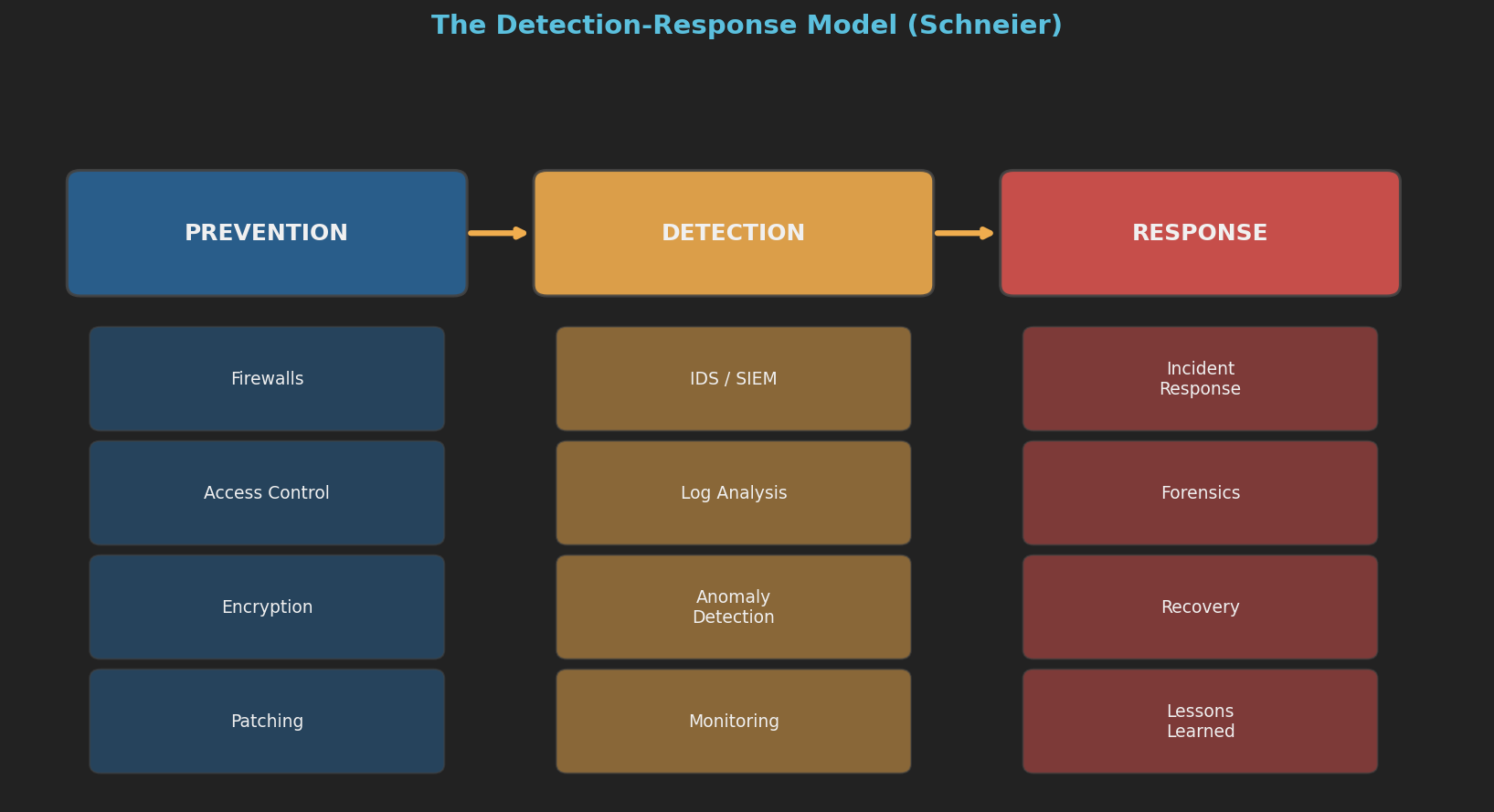

6.7 Security as Process: Detection-Response Model

Technology alone cannot provide security — security is an ongoing process [6]. Complexity is the fundamental enemy: every line of code is a potential vulnerability, every feature increases attack surface, and every integration point is a potential failure mode. Simplicity is itself a security property.

Defense in depth layers security controls so that failure of one does not mean total compromise. The perimeter layer deploys firewalls, DMZs, WAFs, and DDoS protection. The network layer provides segmentation, VLAN isolation, monitoring, and zero trust architecture. The host layer handles OS hardening, endpoint detection, and patch management. The application layer covers input validation, authentication, authorization, and secure coding. The data layer implements encryption at rest and in transit, data classification, and DLP. And the human layer — often the most critical — addresses training, awareness, social engineering resistance, and MFA.

Invest in detection and response, not just prevention. Prevention eventually fails; the question is whether you detect the failure quickly and respond effectively.

7. Cross-Cutting Concerns

These concerns thread through all four layers and cannot be addressed at a single level.

7.1 Privacy

Privacy is not a compliance activity confined to Layer 3 — it is a cross-cutting concern that must be addressed from strategy through technology [3, 12]. At the strategic level, privacy functions as a fundamental right and trust enabler. Organizationally, it requires a dedicated Privacy Officer role and a privacy-aware culture. In processes, it calls for LINDDUN threat modeling, privacy impact assessments, and data subject rights management. And at the technology level, it demands encryption, anonymization, pseudonymization, and data minimization built into the architecture.

The data minimization principle is worth repeating: the best protection for data is to not have it. Every piece of collected data is a liability.

7.2 Economics

Security economics shape outcomes at every layer [6, 8]. Breaches impose costs on third parties who have no control over the insecure system. Information asymmetry means buyers cannot assess security quality before purchase, making certification and audits necessary. Moral hazard leads insured parties to take more risks. And security theater — visible but ineffective measures — wastes resources and creates dangerous false confidence. Countering these economic dynamics requires evidence-based security metrics and red team validation.

7.3 Human Factors

Most security breaches involve a human element [8]. Unusable security is bypassed security — controls must work with human behavior, not against it. People are trained to be helpful, and social engineering exploits precisely this trait. Too many passwords lead to password reuse and weak passwords, making SSO, passwordless authentication, and MFA essential. Too many security alerts cause alert fatigue, where critical warnings get ignored alongside the noise. And trusted insiders, who have both access and potential motive, require controls like least privilege, separation of duties, and behavioral monitoring.

The principal failure mode of security systems is not that the crypto is broken or the firewall misconfigured. It is that the humans using the system behave differently than the designers assumed. Security mechanisms must be psychologically acceptable [14] — easy enough that people use them correctly, rather than working around them.
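The separation-of-duties control mentioned above can be checked mechanically once role assignments are recorded. The following is a minimal sketch. The duty names and the `CONFLICTING_DUTIES` list are hypothetical, and a real organization would derive them from its own policies.

```python
# Hypothetical pairs of duties that one person should not hold together.
CONFLICTING_DUTIES = [
    ("create_payment", "approve_payment"),
    ("write_code", "deploy_to_production"),
]


def sod_violations(assignments: dict[str, set[str]]) -> list[tuple[str, str, str]]:
    """Return (person, duty_a, duty_b) for each conflicting pair held by one person."""
    violations = []
    for person, duties in sorted(assignments.items()):
        for duty_a, duty_b in CONFLICTING_DUTIES:
            if duty_a in duties and duty_b in duties:
                violations.append((person, duty_a, duty_b))
    return violations
```

Running such a check whenever assignments change keeps the control out of people's way. Nobody has to remember the rule, and the exception is flagged automatically.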

7.4 Resilience and Adaptability

Resilience requires designing for failure — assuming every component will eventually be compromised and building systems that degrade gracefully [2, 13]. Organizations must evolve their security faster than adversaries evolve their attacks, just to maintain the status quo; standing still means falling behind. The best security programs actually improve from stress, with each incident response strengthening overall defenses rather than merely restoring the previous state.

Security architecture and governance must coevolve; changing one without the other creates gaps that adversaries will find. Modular architectures allow components to be updated independently without cascading failures. And continuous patching extends beyond software: organizational rules and processes need patching too.
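One way to picture graceful degradation is a control that falls back to a simpler check when the primary one is unavailable, and denies the request only when nothing is left. The sketch below assumes a fail-closed default, which is a design choice made for the example and not something the framework prescribes. The function and parameter names are hypothetical.

```python
from typing import Callable


def check_with_fallback(
    primary: Callable[[str], bool],
    fallback: Callable[[str], bool],
    request: str,
) -> tuple[bool, str]:
    """Try the primary control, then the fallback; if both fail, deny."""
    for name, control in (("primary", primary), ("fallback", fallback)):
        try:
            return control(request), name
        except Exception:
            continue  # this control is unavailable; try the next one
    return False, "fail-closed"
```

The second element of the result records which path made the decision, so degraded operation is visible in monitoring and not silent.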

7.5 Continuous Improvement as a System Property

The framework is not a one-time implementation but an evolving system [14]. Threats change, technology changes, business objectives change. Assessments reveal gaps, incidents reveal failures, metrics reveal trends. All of this information must flow back through the layers — from technology observations to process adjustments to organizational changes to strategic reappraisal. Organizations that treat cybersecurity as a project with a completion date will find their security posture decaying from the moment they stop investing.

8. Maturity Assessment Model

8.1 Five-Level Maturity Table

| Level | Strategy | Organization | Processes | Technology |
|---|---|---|---|---|
| 1 - Initial | No security strategy; reactive to incidents | No defined security roles; ad hoc responsibility | No formal processes; firefighting mode | Basic perimeter security; unpatched systems |
| 2 - Developing | Security acknowledged as important; basic policy exists | CISO appointed; security team forming | Basic risk assessment performed; incident response plan drafted | Firewalls and antivirus deployed; some patching |
| 3 - Defined | Documented strategy with risk appetite; privacy policy in place | Three lines of defense established; clear role assignments; training program running | Threat modeling and formal risk management in place; incident response tested; assessments recurring | Defense in depth implemented; access control models applied; controls hierarchy documented |
| 4 - Managed | Strategy includes trust model and ecosystem governance; metrics-driven | Security culture embedded; cross-functional collaboration; incentive alignment | Continuous threat modeling; automated compliance; risk and performance dashboards; issue management lifecycle | All four pillars (policy, mechanism, assurance, incentive) operational; Zero Trust adopted; cryptographic hygiene |
| 5 - Optimizing | Adaptive governance; security as competitive advantage; industry leadership | Organization evolves faster than threats; security champions everywhere | Predictive threat intelligence; automated response; fast detection-response cycles; privacy-by-design standard | Device design principles standard; architecture and governance evolve together |
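A self-assessment against the table above can be recorded as one level per layer. The sketch below assumes a weakest-link rule, where overall maturity equals the lowest layer level. That aggregation rule is an assumption made for illustration and is not part of the maturity model itself. The function names are hypothetical.

```python
LAYERS = ("Strategy", "Organization", "Processes", "Technology")


def overall_maturity(levels: dict[str, int]) -> int:
    """Overall level = lowest layer level (a weakest-link assumption)."""
    for layer in LAYERS:
        if not 1 <= levels[layer] <= 5:
            raise ValueError(f"{layer} level must be between 1 and 5")
    return min(levels[layer] for layer in LAYERS)


def gaps_to_target(levels: dict[str, int], target: int) -> dict[str, int]:
    """Return how many levels each lagging layer is short of the target."""
    return {
        layer: target - levels[layer]
        for layer in LAYERS
        if levels[layer] < target
    }
```

For example, an organization at levels 3, 2, 3, and 4 would be rated 2 overall under this rule, with Organization the only layer short of a Level 3 target.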

8.2 Self-Assessment Checklist

Layer 1: Strategy

Layer 2: Organization

Layer 3: Processes

Layer 4: Technology

9. Implementation Roadmap

Phase 1: Foundation (Months 1–3)

| Activity | Layer |
|---|---|
| Define risk appetite and risk tolerance with board input | L1 |
| Conduct initial risk assessment | L3 |
| Define security strategy and trust model | L1 |
| Appoint CISO and establish three lines of defense | L2 |
| Define clear role assignments for key security processes | L2 |
| Establish incident response plan | L3 |
| Deploy foundational security controls (firewall, AV, patching) | L4 |
| Conduct security awareness training | L2 |

Phase 2: Core Processes (Months 4–6)

| Activity | Layer |
|---|---|
| Implement threat modeling program (STRIDE + MITRE ATT&CK) | L3 |
| Formalize risk management methodology | L3 |
| Establish issue management lifecycle | L3 |
| Align organizational structure with architecture | L2 |
| Implement defense-in-depth architecture | L4 |
| Document controls hierarchy (policies → procedures) | L4 |
| Deploy SIEM and logging infrastructure | L4 |
| Begin compliance mapping | L3 |

Phase 3: Technology Hardening (Months 7–9)

| Activity | Layer |
|---|---|
| Implement formal access control models | L4 |
| Begin Zero Trust architecture adoption | L4 |
| Cryptographic review and key management program | L4 |
| Apply device design principles to IoT assets | L4 |
| Conduct red team exercise and penetration testing | L3/L4 |
| Implement privacy-by-design in development processes | L3 |
| Test incident response plan with tabletop exercise | L3 |

Phase 4: Metrics and Maturity (Months 10–12)

| Activity | Layer |
|---|---|
| Implement security metrics dashboards (risk, control, and performance indicators) | L3 |
| Integrate LINDDUN privacy threat modeling | L3 |
| Launch recurring self-assessment and audit program | L3 |
| Establish adaptive governance mechanisms | L1 |
| Evaluate security posture against maturity model | All |
| Plan coevolution roadmap for architecture and governance | L2/L4 |
| Board-level security posture report | L1 |

Ongoing

Threat modeling should be continuous for all new systems. Risk should be reassessed quarterly. Strategy should be reviewed annually to incorporate new threats. The organization must evolve faster than its adversaries — standing still means falling behind — and post-incident improvements should feed back into all layers. The framework itself must be treated as a living system, not a completed project [14].
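The review cadence described above (quarterly risk reassessment, annual strategy review) can be tracked with a small due-date check. This is a minimal sketch. The interval lengths of 91 and 365 days are approximations chosen for the example, and the names `REVIEW_INTERVALS` and `overdue_reviews` are hypothetical.

```python
from datetime import date, timedelta

# Review intervals approximating "quarterly" and "annually" (assumed values).
REVIEW_INTERVALS = {
    "risk_reassessment": timedelta(days=91),
    "strategy_review": timedelta(days=365),
}


def overdue_reviews(last_done: dict[str, date], today: date) -> list[str]:
    """Return the reviews whose interval has elapsed since they were last done."""
    return sorted(
        name
        for name, interval in REVIEW_INTERVALS.items()
        if today - last_done[name] > interval
    )
```

Feeding the result into the metrics dashboards from Phase 4 would make a lapsed review visible as early as any other control failure.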

10. References

| # | Title | Author(s) | Year |
|---|---|---|---|
| 1 | Liars and Outliers | Schneier, B. | 2012 |
| 2 | A Hacker’s Mind | Schneier, B. | 2023 |
| 3 | Data and Goliath | Schneier, B. | 2015 |
| 4 | Click Here to Kill Everybody | Schneier, B. | 2018 |
| 5 | We Have Root | Schneier, B. | 2019 |
| 6 | Secrets and Lies | Schneier, B. | 2000 |
| 7 | Applied Cryptography | Schneier, B. | 2014 |
| 8 | Security Engineering (3rd ed.) | Anderson, R. | 2020 |
| 9 | Security Engineering (2nd ed.) | Anderson, R. | 2008 |
| 10 | Security in Computing (5th ed.) | Pfleeger, C. & Pfleeger, S. | 2015 |
| 11 | Security in Computing (6th ed.) | Pfleeger, C. & Pfleeger, S. | 2023 |
| 12 | Threat Modeling | Shostack, A. | 2014 |
| 13 | Platform Ecosystems | Tiwana, A. | 2014 |
| 14 | Stepping Through Cybersecurity Risk Management | Bayuk, J. | 2024 |

Framework synthesized from the security literature and experience. Last updated: 2026-02-11.